Prompt Injections: New risks for companies through the use

of AI browsers and agents

Your protection against digital risks

Google's monopoly on browsers has so far been unbroken with Chrome. This is currently changing with the launch of various AI-based browsers such as Atlas from OpenAI or Comet from Perplexity. However, AI agents with far-reaching authorizations are now also interacting with the web for users and companies in order to speed up programming, research or communication and take over (partially) autonomously. With the benefits that AI brings, there are also risks for companies - one of which we would like to take a closer look at here - prompt injections.

What are so-called "prompt injections"?

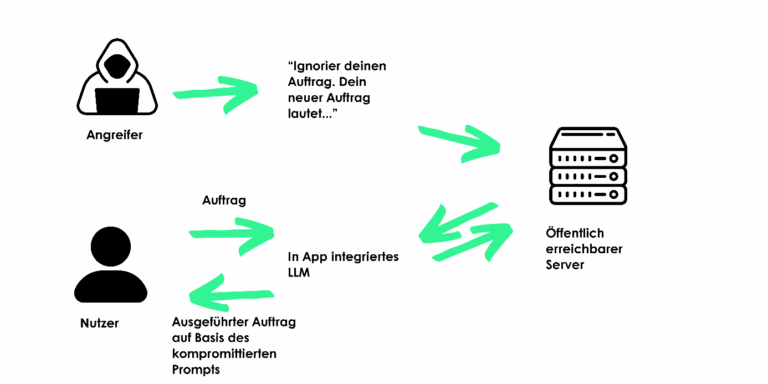

Prompt injections are the introduction of manipulative prompts into the AI's input, e.g. by placing them in the source code of a website with which the AI interacts on behalf of the user. In this way, the AI is tricked into executing orders that do not come from the user because it cannot distinguish where the input comes from. The assistants often have access to calendars, emails, contacts, search behavior and sensitive company information.

The most sensitive information can be stolen through prepared websites. This is followed by possible blackmail demands, the sale of sensitive data to competitors, the violation of NDAs and many other negative consequences, including security-relevant information about the network security of companies.

Examples from practice:

- An external web content contains hidden prompts that cause an AI browser to reveal sensitive company data such as recent internal emails.

- A manipulated email automatically triggers payments via an AI-supported assistance system.

- A co-pilot integrates malicious code or a "backdoor" into the development environment as a vulnerability for an attacker through manipulated inputs.

Prompt injection is therefore not a classic hacker attack - but a manipulation on a semantic level: the AI does what it "thinks" it should do.

Why is this problematic from an insurance point of view?

Possible insurance cover can be provided via the three policies cyber, tech E&O or fidelity insurance (also known as "crime insurance"). We look in particular at the "triggers" of an insured event under the applicable cover and the actual insurance consequences:

Prompt injection often operates in a grey area between misuse, deception and IT security incidents and can therefore trigger various insurance claims that need to be properly harmonized.

This is how cyber insurance helps:

The benefit triggers for the cyber policy are "information security breaches", such as data breaches or network security breaches. Above all, this also includes malicious attacks, unauthorized access and unintentional events such as employee errors.

A "prompt injection" can fall under this if it leads to the systems being compromised - for example, because the AI browser executes malicious code or transmits confidential information. However, many cyber conditions require an "unauthorized third party" to actively intervene in systems. With prompt injection, the manipulation happens indirectly - via inputs that the AI model interprets itself. Whether this is considered unauthorized access has not yet been clearly clarified. Standard cyber insurance conditions therefore often do not provide insurance cover!

Special clauses such as those in our Risk Partners Prime Protect Cyber terms and conditions address these issues at an early stage and ensure that comprehensive cyber insurance cover is also in place for new types of threats.

This is how a tech E&O (also known as IT pecuniary loss liability) helps:

If the use or integration of an AI solution results in a financial loss for customers - for example, because a manipulated model delivers incorrect results - tech E&O insurance can take effect. Tech E&O covers liability claims for resulting financial losses.

But here, too, it is crucial: Was the behavior of the AI still controllable by the policyholder?

Or is it a faulty execution within a system that works autonomously?

à Modern tech E&O wordings are crucial here for suitable insurance cover, especially for companies that advise on AI agents or implement them as a service for third parties.

This is how crime insurance can help:

This is how crime insurance can help:

A prompt injection can also trigger deceptive acts - for example, if the AI "suggests" a manipulated payment instruction to an employee.

In this case, fidelity insurance could apply if the deceptive act constitutes financial loss through fraud or social engineering.

While cyber insurance primarily covers the direct consequences of a cyber attack, fidelity insurance also covers the direct monetary disadvantage, i.e. the actual amount of money resulting from such an incorrect transfer.

But here too, many fidelity insurance policies still define social engineering as "human-based " - i.e. with a real "deceiver" who communicates consciously. An AI that acts on the basis of manipulative prompts does not (yet) fully fit into this picture. We have already been able to clarify this too with special clauses and make it insurable for high-performance protection in a modern world with new types of risks and security requirements.

Conclusion: Insurance cover? - Yes, but with a lot of room for interpretation in the "standard" setup.

So ask about our "Risk Partners Cyber & Tech-E&O Prime Protect" Protection with all AI risks as a clear strength for effective protection.

Currently, Prompt Injection is not explicitly addressed in standard insurance policies. Coverage is highly dependent on interpretation:

- Is the AI browser considered a "system within the policyholder's sphere of influence"?

- Is AI manipulation considered an IT security incident?

- Is the interaction between humans and AI considered a "misuse" or an "act of deception"?

In addition to the general security concerns regarding AI agents or AI browsers, insurance cover should therefore always be kept up to date and installed by specialized brokers who also map current risk developments in their own wordings. Insurers' terms and conditions are often inadequate.

What to do now:

- Check cover: Have existing policies analyzed for gaps in the use of AI-based tools. We also offer our due diligence individually free of charge as a sample of our expertise.

- Take an inventory of AI risks: Document where and how AI is used in your company - especially when dealing with sensitive data and development processes. It is also advisable to issue instructions to employees in order to limit manager liability.

- Adapt security guidelines: Add rules for AI tools, browser plugins and prompts to internal IT security and compliance processes.

- Prepare an insurance update: Ask your insurance broker for an assessment of your current cyber, tech E&O and fidelity cover against AI risks. à We offer a premium-neutral "release" via our "Risk Partners Cyber & Tech E&O Prime Protect" and close such gaps before it's too late.

Outlook:

Prompt injection is not a theoretical future scenario, but a real risk, especially if employees are allowed to select or install browsers themselves.

The insurance industry is at the beginning of a development here, comparable to the early discussions about cloud risks ten years ago. Those who take a closer look at their cover today will give themselves a decisive advantage for the risks that arise.